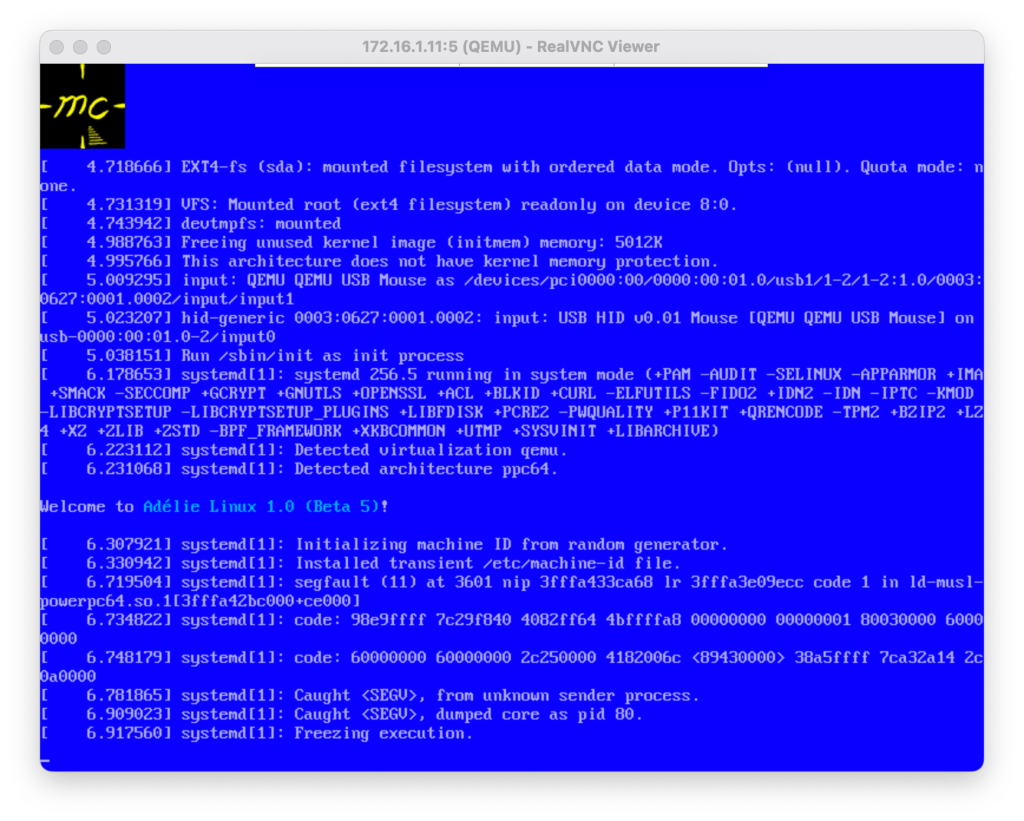

Hello, and welcome back to FOSS Fridays! One of the final preparations for the release of Adélie Linux 1.0-beta6 has been updating the graphical stack to support Wayland and the latest advancements in Linux graphics. This includes updating Mesa 3D. It’s been quite exciting doing the enablement work and seeing Wayfire and Sway running on a wide variety of computers in the Lab. And as part of our effort towards enabling Wayland everywhere, we have added a lot of support for Vulkan and SPIR-V. This has the side-effect of allowing us to build Mesa’s OpenCL support as well.

With Adélie Linux, as with every project we work on at WTI, we are proud to do our very best to offer the same feature set across all of our supported platforms. When we enable a feature, we work hard to enable it everywhere. Since OpenCL is now force-enabled by the Intel “Iris” Gallium driver, in addition to the Vulkan driver, I set off to ensure it was enabled everywhere.

Once again with LLVM

Mesa’s OpenCL support requires libclc, which is an LLVM plugin for OpenCL. This library in turn requires SPIRV-LLVM-Translator, which allows one to translate between LLVM IR and SPIR-V binaries. The Translator uses the familiar Lit test suite, like other components of LLVM. Unfortunately, there were a significant number of test failures on 64-bit PowerPC (big endian):

    Total Discovered Tests: 821
      Passed : 303 (36.91%)
      Failed : 510 (62.12%)

Further down the rabbit hole

Digging in, I noticed that we had actually skipped tests in glslang because it had one test failure on big endian platforms. While digging into that failure, I found that the base SPIRV-Tools package was not handling cross-endian binaries correctly. That is, when run on a big endian system, it would fail to validate and disassemble little endian binaries, and when run on a little endian system, it would fail to validate and disassemble big endian binaries.

I found an outstanding merge request from a year ago against SPIRV-Tools which claimed to fix some endian issues. Applying that to our SPIRV-Tools package allowed those tools to function correctly for me. I then turned back to glslang and determined that the standalone remap tool was simply reading in the binary and assuming it would always match the host’s endianness. I’ve written a patch and submitted it in my issue report, but I am not happy with it and hope to improve it before opening a merge request.
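To illustrate what handling a cross-endian binary involves, here is a minimal sketch of a loader that does not assume the file matches the host. It relies on the SPIR-V magic number, 0x07230203, which is the first word of every module. The names `kSpirvMagic`, `byteSwap32` and `loadModule` are hypothetical; this is not the code in SPIRV-Tools, glslang, or my patch, just one way the idea could be expressed.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// First word of every SPIR-V module.
constexpr uint32_t kSpirvMagic = 0x07230203u;

// Reverse the four octets of a 32-bit word.
constexpr uint32_t byteSwap32(uint32_t w) {
  return (w >> 24) | ((w >> 8) & 0x0000FF00u) |
         ((w << 8) & 0x00FF0000u) | (w << 24);
}

// Hypothetical helper: turn raw file bytes into words in host order.
// The bytes are first assembled as little endian using shifts, so the
// result does not depend on the endianness of the machine running this.
// Returns false if the size is wrong or the magic number is not found.
bool loadModule(const std::vector<uint8_t> &bytes,
                std::vector<uint32_t> &words) {
  if (bytes.size() < 4 || bytes.size() % 4 != 0)
    return false;

  words.assign(bytes.size() / 4, 0);
  for (std::size_t i = 0; i < words.size(); ++i) {
    const uint8_t *p = &bytes[4 * i];
    words[i] = static_cast<uint32_t>(p[0]) |
               (static_cast<uint32_t>(p[1]) << 8) |
               (static_cast<uint32_t>(p[2]) << 16) |
               (static_cast<uint32_t>(p[3]) << 24);
  }

  if (words[0] == kSpirvMagic)
    return true;  // the file was little endian

  if (byteSwap32(words[0]) == kSpirvMagic) {
    // The file was big endian: every word needs swapping.
    for (uint32_t &w : words)
      w = byteSwap32(w);
    return true;
  }

  return false;  // not a SPIR-V module
}
```

With a loader shaped like this, a little endian file and a big endian file holding the same module produce identical word vectors on any host, which is the property the remap tool was missing.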

Back to the familiar

I began looking around at the failures in SPIRV-LLVM-Translator and noticed that a lot of them seemed to revolve around unsafe assumptions on endianness. There were a lot of errors of the form:

    error: line 11: Invalid extended instruction import 'nepOs.LC'

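Note that this string is actually ‘OpenCL.s’, the first eight octets of the ‘OpenCL.std’ import name, byte-swapped on a 32-bit stride. The swapped third word begins with a nul, which is why the string ends early. The specification defines strings as follows: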

A string is interpreted as a nul-terminated stream of characters. All string comparisons are case sensitive. The character set is Unicode in the UTF-8 encoding scheme. The UTF-8 octets (8-bit bytes) are packed four per word, following the little-endian convention (i.e., the first octet is in the lowest-order 8 bits of the word).
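The packing rule is easier to see in code. The following is a minimal sketch of it, written with shifts so that it behaves the same on any host; the function names are hypothetical and none of this is taken from the Translator. The third function reads each word starting from the high-order octet, which is what a plain byte-wise read of the words amounts to on a big endian machine.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <string>
#include <vector>

// Pack a string four octets per word, first octet in the lowest-order
// 8 bits. Room for the nul terminator is always reserved, and the final
// word is zero-padded.
std::vector<uint32_t> packString(const std::string &s) {
  assert(s.find('\0') == std::string::npos && "embedded nul would truncate");
  std::vector<uint32_t> words((s.size() + 1 + 3) / 4, 0);
  for (std::size_t i = 0; i < s.size(); ++i) {
    const uint32_t octet = static_cast<unsigned char>(s[i]);
    words[i / 4] |= octet << (8 * (i % 4));
  }
  return words;
}

// Correct reader: take octets from the low-order end of each word and
// stop at the first nul.
std::string unpackString(const std::vector<uint32_t> &words) {
  std::string out;
  for (uint32_t w : words) {
    for (int shift = 0; shift < 32; shift += 8) {
      const char c = static_cast<char>((w >> shift) & 0xFF);
      if (c == '\0')
        return out;
      out.push_back(c);
    }
  }
  return out;
}

// Incorrect reader: take octets from the high-order end first, as a raw
// byte copy of the words would on a big endian host.
std::string unpackStringHighFirst(const std::vector<uint32_t> &words) {
  std::string out;
  for (uint32_t w : words) {
    for (int shift = 24; shift >= 0; shift -= 8) {
      const char c = static_cast<char>((w >> shift) & 0xFF);
      if (c == '\0')
        return out;
      out.push_back(c);
    }
  }
  return out;
}
```

Packing "OpenCL.std" gives the three words 0x6E65704F, 0x732E4C43 and 0x00006474. Reading them low octet first returns the original string, while reading them high octet first returns "nepOs.LC" and then stops at the nul that leads the third word.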

As an aside, I want to express my deep and earnest gratitude that standards bodies are still paying attention to endianness and ensuring their standards will work on the widest number of platforms. This is a very good thing, and the entire community of software engineering is better for it. This also serves as a great example of the wide scope in which we, as those engineers responsible for portability and maintainability, need to be aware and pay attention.

The standards language quoted above means that on a big endian system, where each word is stored most significant byte first, the string should actually be written to disk/memory as ‘nepOs.LC’. The Translator was not doing this encoding and therefore the binaries were not correct. I attempted to look at how the strings were serialised, and I believe I found the answer in lib/SPIRV/SPIRVStream.cpp, but it seemed like it would be a challenge to do things the correct way. I decided that for the moment, it would be enough to make the Translator operate only on little endian SPIR-V files. After massaging the SPIRVStream.h file to swap when running on a big endian system, I significantly reduced the count of failing tests:

    Total Discovered Tests: 821
      Passed : 500 (60.90%)
      Failed : 313 (38.12%)

However, now we had some interesting looking errors:

    test/DebugInfo/X86/static_member_array.ll:50:10: error: CHECK: expected string not found in input
    ; CHECK: DW_AT_count {{.*}} (0x04)
    ^
    <stdin>:73:33: note: scanning from here
    0x00000096: DW_TAG_subrange_type [10] (0x00000091)
    ^
    <stdin>:76:2: note: possible intended match here
    DW_AT_count [DW_FORM_data8] (0x0000000400000000)
    ^

You will note that the failure is that 0x04 != 0x04'0000'0000. This is what happens if you store a 32-bit value into a 64-bit variable through a badly cast pointer: on a big endian system, the four bytes land in the high-order half. It is very similar to the Clang bug I found and fixed last month. On a hunch, I decided to look at all of the reinterpret_casts used in the translator’s code base, and I hit something that seemed promising. SPIRVValue::getValue, where they were doing equally questionable things with pointers, was amenable to a quick change:

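    #if __BYTE_ORDER__ == __ORDER_BIG_ENDIAN__
    if (ValueSize > CopyBytes) {
      if (ValueSize == 8 && CopyBytes == 4) {
        // On big endian, the low-order half of a 64-bit value is its
        // last four bytes, so copy the 32-bit word there.
        uint8_t *foo = reinterpret_cast<uint8_t *>(&TheValue);
        foo += 4;
        // Copy to where foo points, not over the pointer variable itself.
        std::memcpy(foo, Words.data(), CopyBytes);
        return TheValue;
      }
      // Any other size mismatch is not handled here.
      assert(ValueSize == CopyBytes && "Oh no");
    }
    #endif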

Unfortunately, this only fixed eight tests. The last time there was an endian bug in an LLVM component, it was somewhat easier to find because I could bisect the error. There was a version at which point it had worked in the past, so the change revealed where the error was hiding. Not so with this issue: it seems to have been present since the translator was first written.
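For anyone who wants to see the DW_AT_count mismatch without a big endian machine to hand, here is a small sketch that models the destination as an explicit array of eight bytes in big endian order. The helper names are hypothetical and this is not the translator's code; it only reproduces the arithmetic of copying four bytes into an eight byte slot at the wrong and the right offset.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>

// Interpret eight bytes as a big endian 64-bit integer, whatever the host.
uint64_t readBig64(const std::array<uint8_t, 8> &bytes) {
  uint64_t v = 0;
  for (uint8_t b : bytes)
    v = (v << 8) | b;
  return v;
}

// Lay out a 32-bit value as a big endian host would hold it in memory.
std::array<uint8_t, 4> toBig32(uint32_t v) {
  return {{static_cast<uint8_t>(v >> 24), static_cast<uint8_t>(v >> 16),
           static_cast<uint8_t>(v >> 8), static_cast<uint8_t>(v)}};
}

// Copy a 4-byte value into a zeroed 8-byte slot at the given byte offset
// and report what the slot then holds as a 64-bit big endian integer.
uint64_t widenOnBigEndian(uint32_t v, std::size_t offset) {
  assert(offset + 4 <= 8 && "copy must stay inside the slot");
  std::array<uint8_t, 8> slot{};  // zero-initialised 64-bit destination
  const std::array<uint8_t, 4> src = toBig32(v);
  std::memcpy(slot.data() + offset, src.data(), src.size());
  return readBig64(slot);
}
```

Calling `widenOnBigEndian(4, 0)` yields 0x0000000400000000, the value seen in the failing test, while `widenOnBigEndian(4, 4)` yields 4. On a little endian host the copy at offset 0 happens to give the right answer, which is presumably how code like this goes unnoticed for so long.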

Upstream was apprised of this issue nearly a year ago, with no movement since. The amount of time it would take to do a proper root cause analysis on a codebase of this size (65,000+ lines of LLVM plugin style C++) would be prohibitive for the time constraints on beta6. I’d like to work on it, but this is a low priority unless someone wants to contract us to work on it.

The true impact of non-portable code

There is no way to make a dependency conditional on architecture in an abuild package recipe. This means even if we condition enabling OpenCL support in Mesa on x86_64, Mesa on all other platforms would still pull in the broken translator as a build-time dependency. It is likely that libclc itself would not build properly with a broken translator, meaning Mesa’s dependency graph would be incomplete on every other architecture due to libclc as well.

Unfortunately, this means I had to make the difficult decision to disable OpenCL globally, including on x86_64. We never had OpenCL support, so this isn’t a great loss for our users, and we can always come back to it later. However, a few of Mesa’s drivers require OpenCL: namely, the Intel Vulkan driver, and the Intel “Iris” Gallium driver, which supports Broadwell and newer. On these systems, using the iGPU will fall back to software rendering only. We cannot offer hardware acceleration on these systems until we enable OpenCL, for reasons that are not entirely clear to me. It was possible before, and while I understand the performance enhancements with writing shaders this way, not providing a fallback option is really binding us here.

It is my sincere hope that software authors, both corporate and individual, begin to realise the importance of portability and maintainability. If the SPIRV-LLVM-Translator had been written with more portability in mind, this wouldn’t be an issue. If the Intel driver in Mesa had been written with fewer dependencies in mind, this wouldn’t be an issue. The future is bright if we can all work together to create good, clean code. I greatly look forward to that future.

Until then, at least software rendering should work on Broadwell and newer, right?